There is more misinformation floating around about AI than almost any other topic in tech right now. The myths that non-technical people believe most often come from Hollywood, breathless news headlines, and people who either fear AI or want to sell you something related to it. A few of these myths are harmless. Most are not: they either scare people away from tools that could genuinely help them, or they create dangerous overconfidence in tools that have real limitations. Both outcomes cost you.

This post tackles the most common misconceptions head-on — in plain language, without hype in either direction.

Myth 1: AI Is Intelligent the Way Humans Are Intelligent

This is the foundational myth that all the others grow from, and it’s worth clearing up first.

When people hear the word “intelligent” in “artificial intelligence,” they imagine something that thinks, reasons, and understands the world the way a human does. It doesn’t. Current AI tools — including the most advanced ones like ChatGPT, Claude, and Gemini — are extraordinarily sophisticated pattern-matching systems. OpenAI, Anthropic, and Google trained them on enormous amounts of text and data, and they have learned to produce outputs that look intelligent because they mirror the patterns of intelligent human communication.

But they don’t understand what they’re saying. Not in the way you understand what you’re reading right now. They don’t have beliefs, intentions, or awareness. They generate the most statistically likely response to your input based on their training. The output often looks like understanding. It isn’t the same thing.

This matters in practice because it explains why AI can produce confident, well-written answers that are completely wrong. It isn’t lying. Unlike a knowledgeable human, it cannot reliably tell a correct answer from a plausible-sounding incorrect one.

Myth 2: AI Will Take Over Everything and Replace All Jobs

This one generates more anxiety than any other, and it deserves a careful, honest answer rather than dismissal.

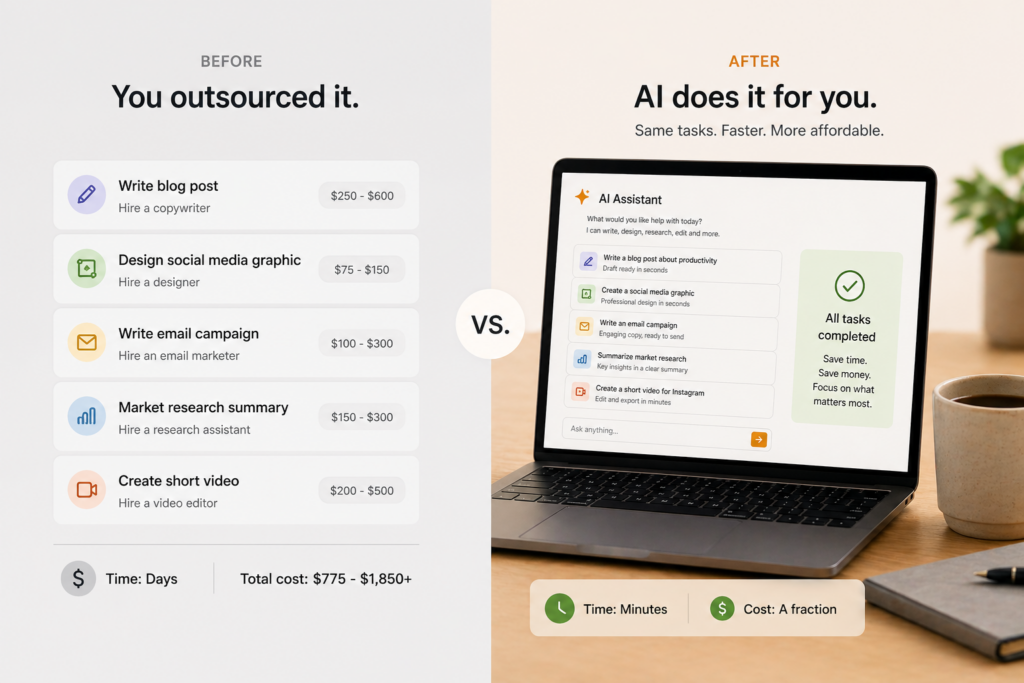

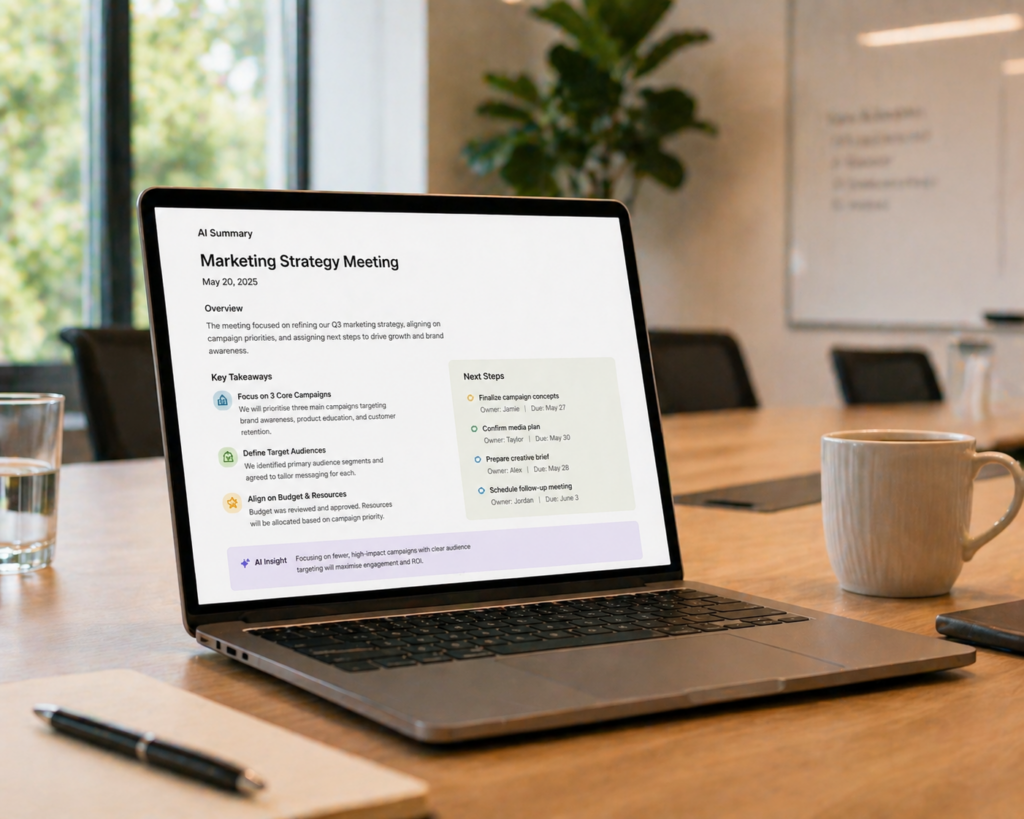

AI already automates specific tasks within jobs — not jobs wholesale. There is a meaningful difference. Writing a first draft, summarising a meeting, answering routine customer questions, and generating a line of code — these are tasks that AI handles well. The broader job that surrounds those tasks — managing relationships, making judgment calls, applying contextual knowledge, taking responsibility for outcomes — remains human work.

History gives useful context here. Previous waves of automation — from the printing press to spreadsheets to the internet — eliminated specific tasks while creating new categories of work that hadn’t existed before. AI is following the same pattern. The jobs most at risk are those built almost entirely around a single automatable task with very little surrounding judgment or human interaction. Most jobs are not structured that way.

The more accurate framing is this: AI will change most jobs but replace very few of them entirely, at least in the near term. Beyond the narrow, single-task roles described above, the people most exposed are those who refuse to learn how to work alongside AI. Those whose jobs involve complexity, creativity, or human judgment have far less to worry about.

Myth 3: AI Knows Everything and Is Always Right

This myth is dangerous precisely because AI sounds so confident.

AI tools do not know everything. They were trained on data up to a certain point in time, which means anything that happened after that date is outside their knowledge unless they have web search enabled. Even within their training data, they have gaps, biases, and blind spots — because the data they were trained on has gaps, biases, and blind spots.

More importantly, AI tools hallucinate. This is the term for when an AI generates information that sounds accurate but is factually wrong — a made-up statistic, an incorrect date, a citation that doesn’t exist. Every AI tool does this, including the most advanced ones, and none of them warns you when it happens. The AI presents hallucinated information with the same confident tone it uses for accurate information.

The practical implication is straightforward: never use AI output for anything important without verifying it independently. For writing a casual email or brainstorming ideas, errors are low-stakes. For medical information, legal advice, financial decisions, or anything you’re going to publish or share professionally, always check the facts yourself.

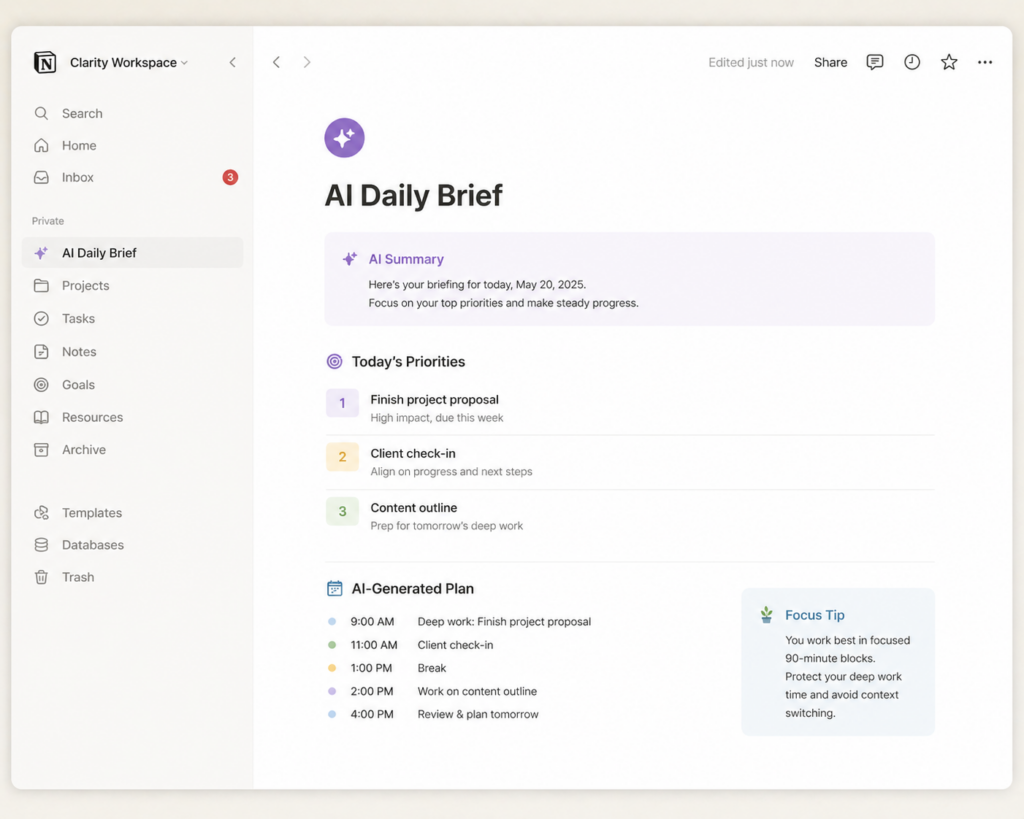

Myth 4: AI Is Only for Technical People

This one stops more people from trying AI than any other myth, and it is the most straightforwardly false.

Using an AI tool requires exactly the same skills as sending a text message. You open an app or a website, you type what you want, and you read the response. There is no installation process to navigate, no command line to learn, no settings to configure before you start. The interface of every major AI tool is a text box. If you can type, you can use it.

The idea that AI is “for technical people” comes from the early days of computing when interacting with software genuinely did require technical knowledge. That era is over. The entire point of modern AI tools is that natural language — the way you already talk and write — is the interface. You don’t need to learn anything new to begin.

Myth 5: Free AI Tools Are Too Limited to Be Useful

Many people assume that the free versions of AI tools are stripped-down demos designed to frustrate you into paying. For the major tools, this simply isn’t true.

The free tiers of ChatGPT, Claude, and Gemini run on the same underlying technology as the paid versions. The main restrictions are on how much you can use them, not on what they can do for everyday tasks. You can draft emails, summarise documents, research topics, get writing feedback, brainstorm ideas, and handle dozens of other routine jobs without spending a penny.

The paid plans matter when you use AI heavily every day, need to work with very long documents, or want access to the most powerful reasoning models for complex tasks. For most people, particularly those just getting started, the free tier is more than sufficient.

This is one of the most persistent AI myths among people who have never tried the tools themselves.

Myth 6: AI Is Listening to You All the Time

Another common myth is that these tools are constantly monitoring you. The fear is understandable given what people know about how smartphones and smart speakers work. But desktop and web-based AI tools like ChatGPT, Claude, and Gemini are not listening in the background.

These tools process only what you actively type and submit in a conversation. They are not monitoring your microphone, reading your other browser tabs, or tracking what you do outside the app. They receive your message when you send it, process it, and respond. That is the full extent of the interaction.

That said, these companies typically use the text of your conversations to improve their models — unless you opt out, which all three major tools allow you to do in settings. Reading each platform’s privacy policy and adjusting your data settings takes about five minutes and is worth doing.

Myth 7: AI Responses Are Always Biased and Untrustworthy

This myth overstates a real problem. AI responses can be biased, and understanding when and why helps you use them critically rather than avoid them entirely.

AI models learn from human-generated text, and human-generated text reflects human biases. The model absorbs those biases to varying degrees. The major AI companies work hard to identify and reduce bias in their outputs, but they haven’t eliminated it, and they’re unlikely to do so completely.

Apply the same approach you’d use with any source of information. Think critically. Consider alternative perspectives. Don’t treat any single source — AI or otherwise — as the final word on complex topics. AI is a tool, not an authority.

Myth 8: If You Use AI, You’re Cheating

This one comes up most often around writing and creative work, and the honest answer is: it depends on the context.

Using AI to write an essay and submitting it as your own original academic work — yes, that violates most academic integrity policies and is dishonest. Using AI to help you draft a work email, refine a business proposal, brainstorm ideas, or improve the clarity of something you’ve written — that is using a tool, no different in principle from using a spell checker, a thesaurus, or asking a colleague to read your draft.

The question is always: does the context require the work to be entirely your own, unaided? In academic and some professional contexts, yes. In most everyday situations, no. A plumber who uses a power drill instead of a hand tool hasn’t cheated. They’ve used a better tool. AI is a better tool for a wide range of tasks, and using it thoughtfully is a skill worth developing.

The Truth Behind These AI Myths

The myths about AI that do the most damage are the ones at both extremes — that AI is a dangerous superintelligence that will replace humans, or that it’s a gimmick too limited to be worth trying. The reality is considerably less dramatic and considerably more useful.

AI tools are powerful, practical, and accessible to anyone. They also have real limitations that are worth understanding. Neither the fear nor the hype serves you as well as simply trying one and forming your own view.