If you haven’t been paying close attention to AI over the past twelve months, you may have missed one of the fastest periods of technological change in recent memory. Understanding how AI has changed recently is not just for tech enthusiasts — the developments of the past year have direct, practical implications for anyone who uses these tools in their daily life or work. This guide covers the most significant shifts in plain English, without the hype.

If you’re starting from scratch, our introduction to AI covers the basics first.

AI Became Genuinely Useful for Everyday People

A year ago, the most common reaction to trying AI tools was impressed but not convinced. The tools were capable in demos but often frustrating in real use — they made things up confidently, struggled with nuanced instructions, and required significant effort to extract useful output.

That has changed substantially. The current generation of models (GPT-5, Claude 4, Gemini 3) produces more reliable, more accurate, and more naturally useful responses than its predecessors. Hallucinations still occur, but less often, and the models are less likely to state them with unwarranted confidence. Instruction-following has improved dramatically. The gap between what AI promises and what it delivers in practice has narrowed considerably.

In short, AI has crossed a threshold in the past year from interesting experiment to genuinely useful daily tool for a much wider range of people.

Models Got Significantly More Capable

The raw capability of the leading AI models improved substantially over the past twelve months. On standard benchmarks for reasoning, coding, writing, and complex problem-solving, the current top models outperform their predecessors by meaningful margins.

Crucially, however, benchmark performance and real-world usefulness are not the same thing. The more meaningful improvement for everyday users is that current models handle ambiguous instructions better, maintain coherence over longer conversations, and make fewer confident errors than previous versions. These practical improvements matter more than benchmark scores for most use cases.

Context Windows Expanded Dramatically

One of the most practically significant changes of the past year has been the expansion of context windows — the amount of text an AI can process in a single session.

A year ago, most mainstream models could handle roughly the equivalent of a long article or short document. Today, Claude Opus 4.6 offers a one-million-token context window in beta, and Gemini’s Pro model offers similar capacity. In practical terms, this means you can now paste an entire book, a full codebase, or months of email history into an AI tool and ask questions about all of it at once.

For professionals working with long contracts, large datasets, or extensive research, this development alone represents a significant practical upgrade.
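As a rough illustration of what a one-million-token window means in practice, the short Python sketch below estimates whether a piece of text would fit. It assumes a common rule of thumb of about four characters per token for English text. Real tokenizers vary by model and language, so treat the result as a ballpark figure. The names `estimate_tokens` and `fits_in_window` are hypothetical helpers written for this example, not part of any vendor's API.

```python
# Rough heuristic (an assumption, not a vendor specification):
# English text averages about 4 characters per token.
CHARS_PER_TOKEN = 4


def estimate_tokens(text: str) -> int:
    """Return a rough token estimate for English text."""
    return len(text) // CHARS_PER_TOKEN


def fits_in_window(text: str, window_tokens: int = 1_000_000) -> bool:
    """Check whether the estimated token count fits in the given window."""
    return estimate_tokens(text) <= window_tokens


# A stand-in for a 100,000-word book: 100,000 six-character "words"
# (five letters plus a space), i.e. 600,000 characters in total.
book = "lorem " * 100_000

print(estimate_tokens(book))  # 150000
print(fits_in_window(book))   # True
```

By this estimate, a book of that length uses well under a fifth of a one-million-token window. For an exact count, a provider's own token-counting tool is the better source.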

For a plain-English definition of context windows and other technical terms, see our AI glossary.

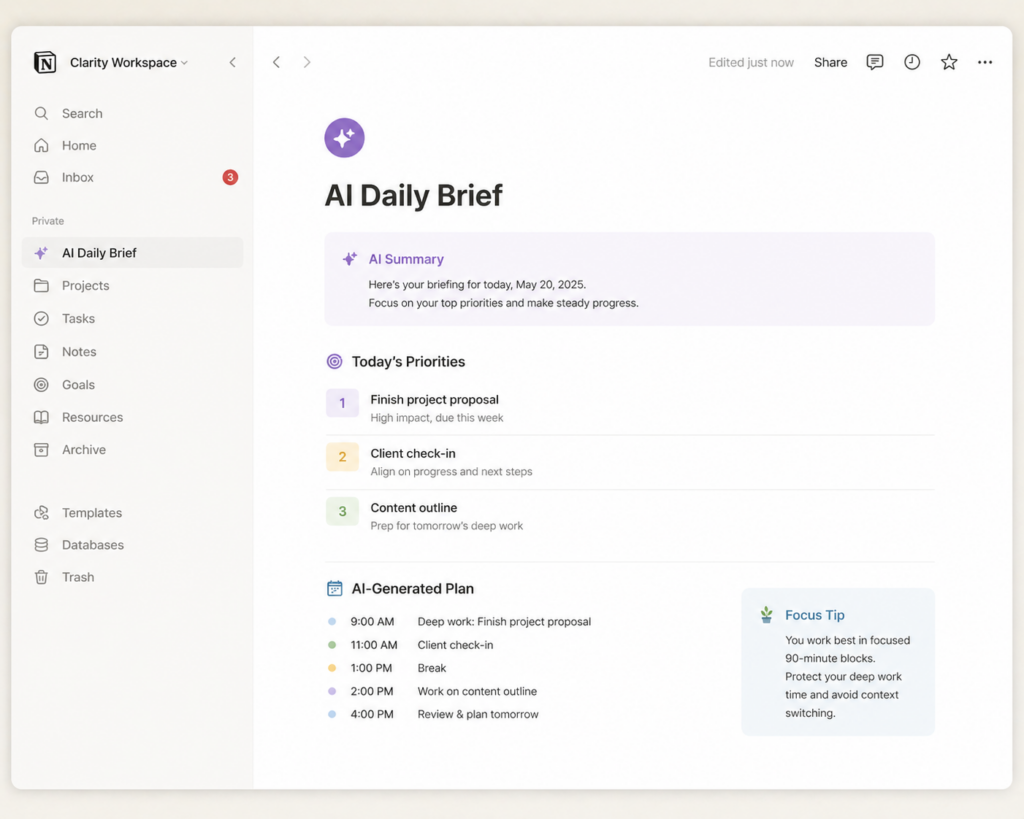

AI Agents Arrived — and Are Still Maturing

Perhaps the most significant structural change in AI over the past year is the emergence of AI agents — systems that don’t just generate text but take actions in the real world. Booking appointments, sending emails, browsing websites, managing files, filling in forms — these are tasks that AI agents can now handle, at least partially.

Claude’s Cowork feature manages files on your desktop. ChatGPT’s Operator mode browses websites and completes multi-step tasks. Gemini integrates directly with Google Workspace to take actions across your documents and calendar.

Nevertheless, it is important to be clear-eyed about where agents currently stand. They work well on structured, predictable tasks. They struggle with complexity, nuance, and anything requiring genuine judgment. Treating an AI agent as fully autonomous for anything consequential remains premature — human review of outcomes is still essential. The technology is genuinely promising, and it is also genuinely unfinished.

For more on what these tools can and cannot do today, see our realistic guide to AI capabilities.

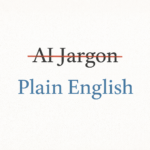

Prices Fell While Capability Increased

A year ago, accessing the most capable AI models required a $20 monthly subscription at minimum. Today, the free tiers of ChatGPT, Claude, and Gemini offer access to genuinely capable models — not stripped-down demos, but real flagship-adjacent models with usage limits.

Meanwhile, the paid tiers have become more competitive. All three major platforms offer their standard paid plan at approximately $20 per month, with enterprise and power-user tiers above that. The cost per unit of AI capability has dropped significantly, meaning more people can access more powerful tools for less money than was possible a year ago.

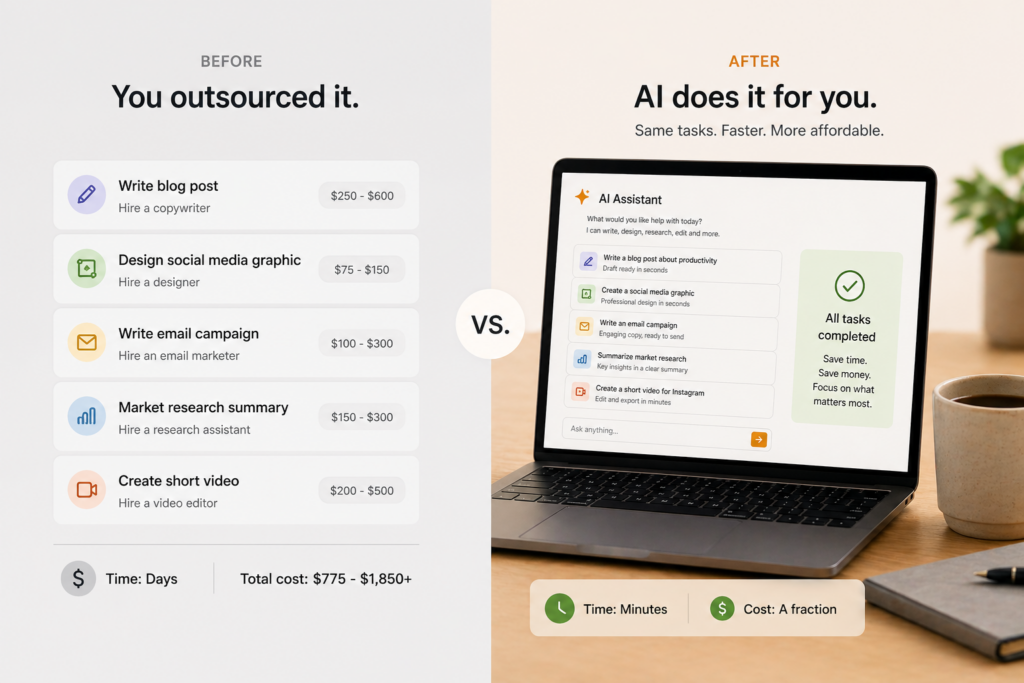

AI Integrated Into Everyday Software

Twelve months ago, using AI typically meant opening a separate tab or app. Today, AI is embedded directly into the software most people already use every day.

Microsoft Copilot is built into Word, Excel, Outlook, and Teams. Gemini is integrated into Gmail, Google Docs, and Google Sheets. Claude has add-ins for Excel and PowerPoint. Grammarly, Notion, Canva, and dozens of other everyday tools have added AI features directly into their interfaces.

Consequently, many people are now using AI without thinking of it as AI — it’s simply a feature of the tool they already use. This integration represents a fundamental shift in how AI reaches everyday users.

What These Changes Mean for You

Understanding how AI has changed recently matters because it shifts the calculus of whether and how to engage with these tools.

If you tried AI six months ago and found it underwhelming, it is worth trying again. The tools have improved meaningfully. If you have been using AI regularly but only for simple tasks, the expanded capabilities — particularly around long documents and agent actions — may unlock new use cases you hadn’t considered. And if you haven’t started yet, the combination of improved capability, lower cost, and wider availability makes this a more accessible entry point than at any previous moment.