One of the first questions people ask before trying any new technology is whether it is safe — and when it comes to AI, that question is especially worth answering carefully. Is AI safe to use? The short answer is yes, for most everyday tasks, with some important caveats that every user should understand. This guide walks through the most common privacy concerns about AI tools, what actually happens to your data, and the simple steps you can take to protect yourself.

Is AI Safe to Use for Everyday Tasks?

For the vast majority of everyday tasks — drafting emails, summarising documents, answering questions, brainstorming ideas — AI tools are safe to use. The major platforms, including ChatGPT, Claude, and Gemini, use industry-standard encryption to protect data in transit and at rest. None of them is designed to expose your personal information to other users.

However, safe does not mean consequence-free. Understanding how these platforms handle your data is the foundation of using them responsibly. So before diving into specific concerns, it helps to understand what actually happens when you send a message to an AI tool.

What Happens to Your Data When You Use AI?

When you type a message into an AI tool and hit send, that message travels to the company’s servers, where the AI model processes it and generates a response that is sent back to you. The conversation is stored on the company’s servers, at least temporarily.

Most major AI platforms use your conversations to improve their models — unless you explicitly opt out. This means a human reviewer or an automated system may read samples of conversations to assess quality and identify errors. Crucially, this is not unique to AI. Email providers, social media platforms, and search engines have operated similarly for years.

That said, your data is not shared with other users. Other people using ChatGPT cannot browse your conversations, and other Claude users cannot access your sessions. The data stays within the platform’s systems, subject to its privacy policy.

The Most Important Privacy Rule for AI Users

The single most important rule for using AI safely is this: never paste sensitive information into a public AI tool.

This includes passwords, bank account numbers, national insurance or social security numbers, confidential business information, client data, medical records, and any other information you would not want stored on a third-party server.

Even if the platform’s privacy practices are entirely above board, entering sensitive data into any third-party service carries risk. Treat AI tools the way you would treat any cloud-based service — useful and generally safe, but not the right place for your most sensitive information.
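For readers comfortable with a little scripting, the rule above can be partly automated. The following is a minimal sketch in Python, assuming you want to mask a few obvious patterns before pasting text into an AI tool. The pattern names and the `redact` function are hypothetical and written for illustration only. Simple patterns like these will miss plenty of sensitive data (names, addresses, passwords, medical details), so treat this as a first pass rather than a guarantee.

```python
import re

# Illustrative patterns only (assumptions, not an exhaustive rule set).
PATTERNS = {
    # Basic email shape: local part, "@", domain, dot, top-level domain.
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    # US social security number written as 123-45-6789.
    "US_SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    # 13 to 16 digits, optionally separated by single spaces or hyphens.
    "CARD": re.compile(r"\b\d(?:[ -]?\d){12,15}\b"),
}


def redact(text: str) -> str:
    """Replace every match of each pattern with a labelled placeholder."""
    for label, pattern in PATTERNS.items():
        text = pattern.sub(f"[{label} REDACTED]", text)
    return text


if __name__ == "__main__":
    sample = "Contact jane@example.com, SSN 123-45-6789, card 4111 1111 1111 1111."
    print(redact(sample))
    # Contact [EMAIL REDACTED], SSN [US_SSN REDACTED], card [CARD REDACTED].
```

Running the redaction locally, before anything leaves your machine, is the point of the design: the original values never reach a third-party server. Always reread the result yourself before pasting it anywhere.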

Does AI Store Your Conversations?

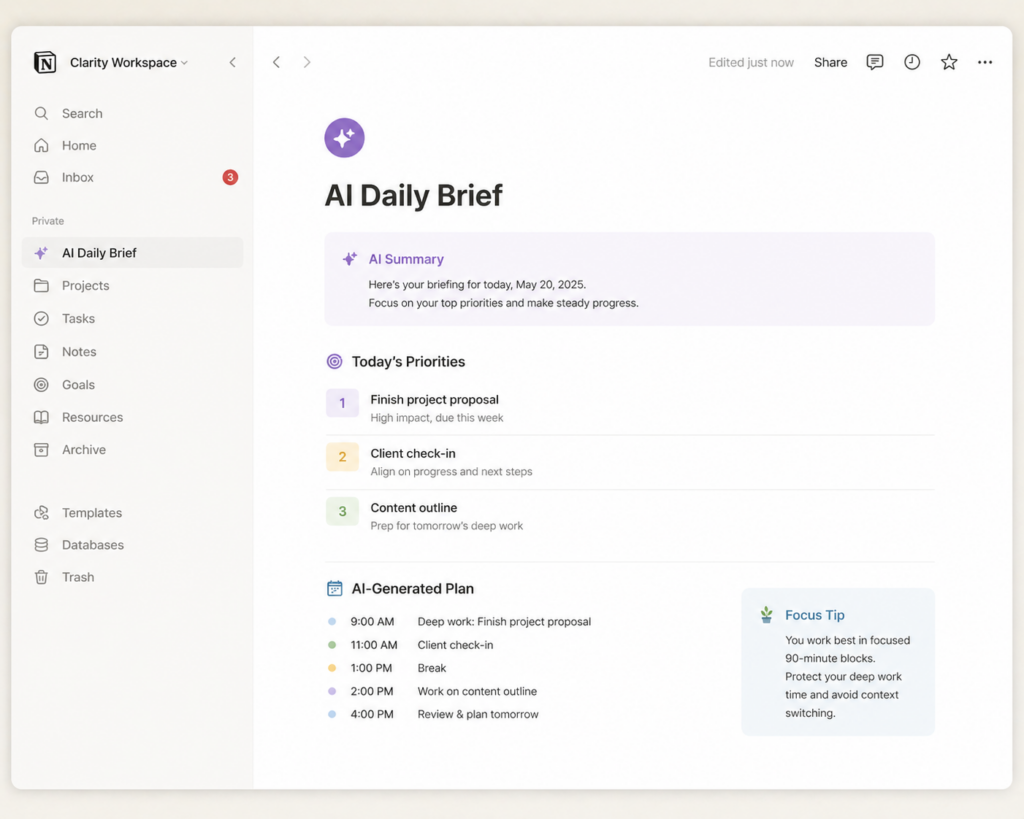

The answer varies by platform and by the settings you choose. By default, most major AI tools store your conversation history. This serves two purposes — it lets you revisit past conversations, and it allows the company to use those conversations for model improvement.

Fortunately, all three major platforms give you control over this. In ChatGPT, the data controls in Settings let you stop your conversations from being used for training, and temporary chats are not saved to your history. In Claude, you can manage your data preferences through the Privacy settings. In Gemini, your activity controls are managed through your Google account settings. The exact names of these options change from time to time, so check the current settings page on each platform.

Taking five minutes to review and adjust these settings is worthwhile for anyone concerned about data retention.

Is AI Safe to Use at Work?

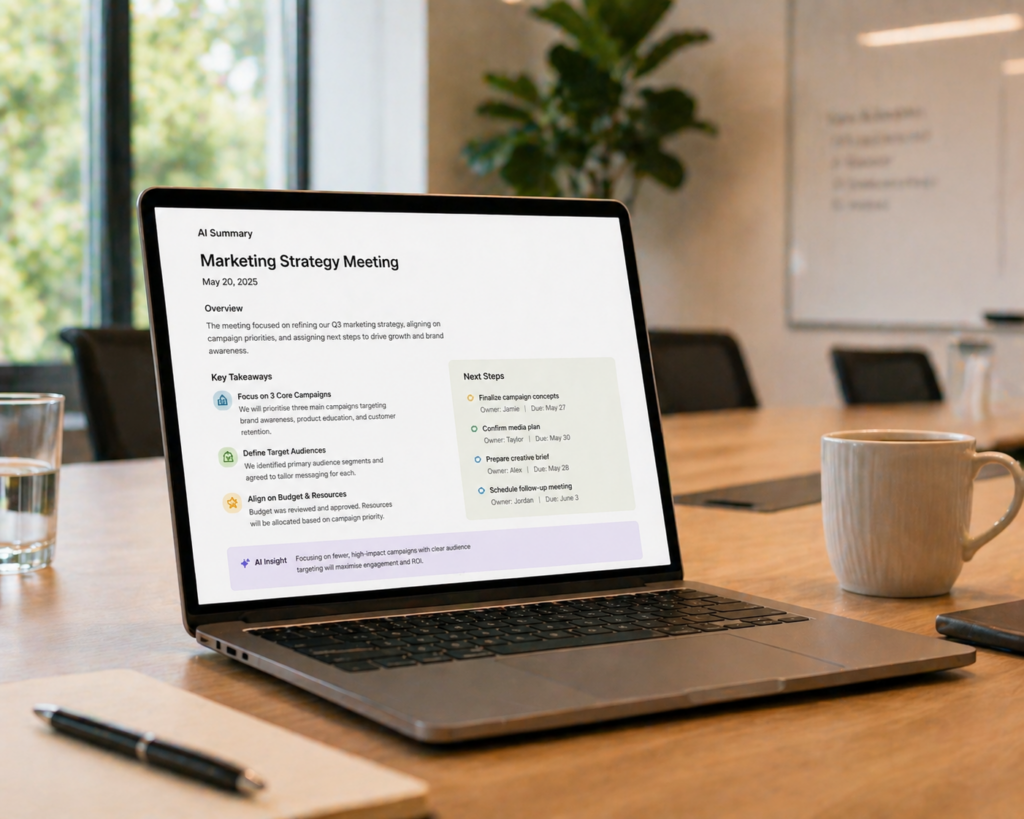

This is where the answer becomes more nuanced. Many companies have specific policies about which AI tools employees can use and what information they can share with them. Before using a consumer AI tool for work tasks, check your company’s policy.

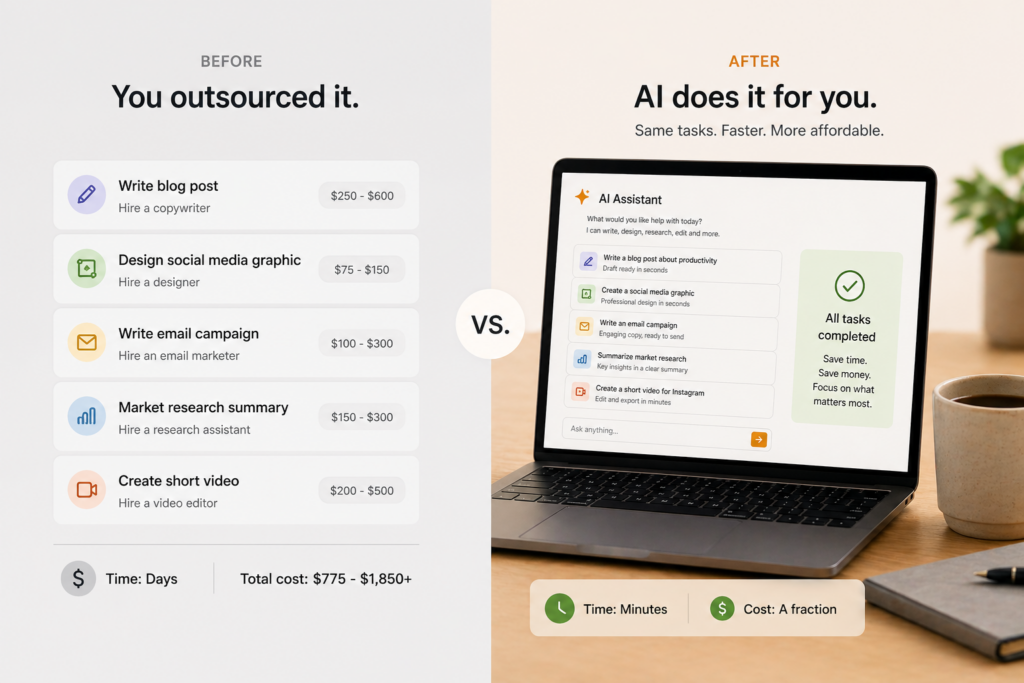

The core concern for businesses is confidentiality. If you paste a client proposal, internal strategy document, or proprietary data into a consumer AI tool, that information may be used to train future models — depending on the platform’s terms. Enterprise versions of these tools — ChatGPT Enterprise, Claude for Work, Gemini for Google Workspace — offer stronger data protection guarantees, including commitments not to train on your data.

If your work involves confidential information, use the enterprise version of whichever tool your company approves, or use AI only for tasks that don’t involve sensitive data.

Can AI Tools Be Hacked?

Any online service can theoretically be breached. The major AI platforms invest heavily in security, but incidents do happen: in March 2023, for example, a bug in ChatGPT briefly exposed some users’ chat titles and limited payment details to other users. No online service carries zero risk.

The practical implication is the same rule that applies to any online account — use a strong, unique password, enable two-factor authentication where available, and don’t share your account credentials with anyone.

Is AI Safe to Use for Children?

Many major AI tools set a minimum age of thirteen for account creation, in line with child privacy rules such as the US Children’s Online Privacy Protection Act (COPPA), and some set the minimum at eighteen. However, age verification is limited, and the content AI tools can produce is not always appropriate for younger users.

Parents concerned about children using AI tools should review the platform’s terms of service, use parental controls where available, and have an open conversation with their children about how to use AI responsibly — including the importance of not sharing personal information.

The Practical Safety Checklist

To summarise the key steps for using AI safely:

- Never enter passwords, financial details, or sensitive personal information into any AI tool.
- Review the privacy settings on whichever platform you use and adjust data retention preferences to suit your comfort level.
- Check your employer’s AI policy before using consumer tools for work tasks.
- Use strong, unique passwords and enable two-factor authentication on your AI accounts.
- For sensitive professional use, choose an enterprise-grade tool with appropriate data protection guarantees.

Following these steps means you can use AI tools confidently for the vast majority of tasks they’re suited for — without exposing yourself to unnecessary risk.